What Is Predicate Pushdown?

Predicate pushdown is the query optimization technique of moving SQL filter conditions (predicates) as far down the data access chain as possible — ideally into the metadata layer or the storage reader itself — so that irrelevant data is never read into engine memory at all. It is the foundational optimization that makes analytical queries on large-scale data lakehouses practical.

Without predicate pushdown, a query like SELECT * FROM orders WHERE order_date = '2026-01-01' against a 10TB orders table would read the entire table into memory and filter it there, which is obviously wasteful. With predicate pushdown, the engine uses partition metadata and file statistics to identify which files can contain January 1st data and reads only those files: perhaps 27GB instead of 10TB.

The term 'pushdown' refers to the direction of optimization: filter conditions are 'pushed down' from the query plan (which operates at a high, logical level) through multiple layers toward the storage system (which operates at a low, physical level). The further down the filter is pushed, the less data must be read.

Predicate Pushdown in Apache Iceberg

Apache Iceberg's metadata hierarchy, combined with the statistics stored inside Parquet data files, creates three distinct opportunities for predicate pushdown:

Level 1: Manifest List Pruning

The manifest list contains partition-level summary statistics for each manifest file. The query engine applies predicates to these summaries, eliminating entire manifest files that cannot contain relevant rows. A table with 1000 manifest files may have 990 eliminated at this level — reducing subsequent metadata reads by 99%.
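The overlap test behind this step can be sketched in a few lines of Python. This is a simplified model, not Iceberg's implementation: the ManifestEntry class, the file names, and the one-manifest-per-month layout are all hypothetical, and each manifest is assumed to carry a single lower and upper bound for one date partition field.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ManifestEntry:
    """Hypothetical stand-in for one row of a manifest list."""
    path: str
    lower: date  # smallest partition value covered by the manifest
    upper: date  # largest partition value covered by the manifest


def prune_manifests(entries, lo, hi):
    """Keep manifests whose partition range overlaps the closed range [lo, hi]."""
    return [m for m in entries if m.lower <= hi and m.upper >= lo]


# Twelve hypothetical manifests, one per month of 2025 (days 1 to 28 only).
manifests = [
    ManifestEntry(f"manifest-{month:02d}.avro",
                  date(2025, month, 1), date(2025, month, 28))
    for month in range(1, 13)
]

# An equality predicate is the degenerate range [target, target].
target = date(2025, 3, 14)
kept = prune_manifests(manifests, target, target)
print([m.path for m in kept])  # ['manifest-03.avro']
```

Only the surviving manifest needs to be opened in the next step. The other eleven are skipped without reading them.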

Level 2: Manifest File Pruning (Data Skipping)

Each manifest file contains per-data-file column statistics (min/max values, null counts). The engine applies predicates to these per-file statistics, eliminating data files that cannot contain rows satisfying the filter. A partition's 100 data files may be reduced to 3 relevant files at this level.
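A minimal sketch of how min/max values and null counts can rule a file out, assuming a single integer column and a handful of comparison operators. The ColumnStats class, the might_match function, and the file names are illustrative and do not correspond to any Iceberg API. The key property is that the check is conservative: it returns False only when the statistics prove that no row can match.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColumnStats:
    """Hypothetical per-file statistics for one integer column."""
    min_value: int
    max_value: int
    null_count: int


def might_match(stats, op, value=None):
    """Return False only when the stats prove no row can satisfy the predicate."""
    if op == "=":
        return stats.min_value <= value <= stats.max_value
    if op == ">":
        return stats.max_value > value
    if op == "<":
        return stats.min_value < value
    if op == "is_null":
        return stats.null_count > 0
    return True  # unknown operator: keep the file so results stay correct


def select_files(files, op, value=None):
    """Return the paths of files that cannot be ruled out by their stats."""
    return [path for path, stats in files.items() if might_match(stats, op, value)]


# Three hypothetical data files with non-overlapping value ranges.
files = {
    "data-001.parquet": ColumnStats(1, 500, 0),
    "data-002.parquet": ColumnStats(501, 1000, 12),
    "data-003.parquet": ColumnStats(1001, 1500, 0),
}

print(select_files(files, "=", 750))   # ['data-002.parquet']
print(select_files(files, ">", 900))   # ['data-002.parquet', 'data-003.parquet']
print(select_files(files, "is_null"))  # ['data-002.parquet']
```

A file that survives this check may still contain no matching rows, so the engine still applies the filter to the rows it reads. Statistics only ever remove work; they never replace the filter.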

Level 3: Parquet Row Group Pruning

Within each data file, Parquet stores per-row-group column statistics. The engine applies predicates within the file, eliminating row groups (typically 128MB each) that cannot contain relevant rows. A 500MB file with 4 row groups may result in only 1 row group being read.

Partition Pruning as Predicate Pushdown

Partition pruning is the most commonly discussed form of predicate pushdown. In Apache Iceberg with hidden partitioning, partition pruning works transparently. For a table partitioned by days(event_time), a query with WHERE event_time >= '2026-01-15' AND event_time < '2026-01-16' causes Iceberg to apply the days transform to the predicate bounds, derive the 2026-01-15 day value, and eliminate all manifest files and data files that belong to other day partitions. No data from other days is read.

The power of Iceberg's partition pruning relative to Hive-style partitioning is that it works automatically from predicates on the original data columns — users do not need to know or filter on a synthetic partition column. The engine applies the partition transform to convert the data column predicate into a partition value predicate transparently.
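The conversion from a data column predicate to a partition value predicate can be illustrated as follows. This sketch assumes a day transform that maps a UTC timestamp to the number of days since 1970-01-01, and a filter that covers a half-open timestamp range. The function names and the partition-to-file mapping are hypothetical.

```python
from datetime import date, datetime, timedelta, timezone

EPOCH = date(1970, 1, 1)


def day_transform(ts):
    """Map a timezone-aware timestamp to days since 1970-01-01 (UTC)."""
    return (ts.astimezone(timezone.utc).date() - EPOCH).days


def partition_range(start, end_exclusive):
    """Translate start <= event_time < end_exclusive into inclusive day bounds."""
    last_instant = end_exclusive - timedelta(microseconds=1)
    return day_transform(start), day_transform(last_instant)


# The user filters on the timestamp column, never on a partition column.
start = datetime(2026, 1, 15, tzinfo=timezone.utc)
end_exclusive = datetime(2026, 1, 16, tzinfo=timezone.utc)
lower, upper = partition_range(start, end_exclusive)

# Hypothetical mapping of day partition values to data files.
partitions = {
    day_transform(datetime(2026, 1, d, tzinfo=timezone.utc)): f"day-2026-01-{d:02d}.parquet"
    for d in (14, 15, 16)
}

selected = [path for day, path in partitions.items() if lower <= day <= upper]
print(selected)  # ['day-2026-01-15.parquet']
```

The filter is written against the timestamp, and the engine derives the partition bounds from it. That is why no synthetic partition column appears in the query.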

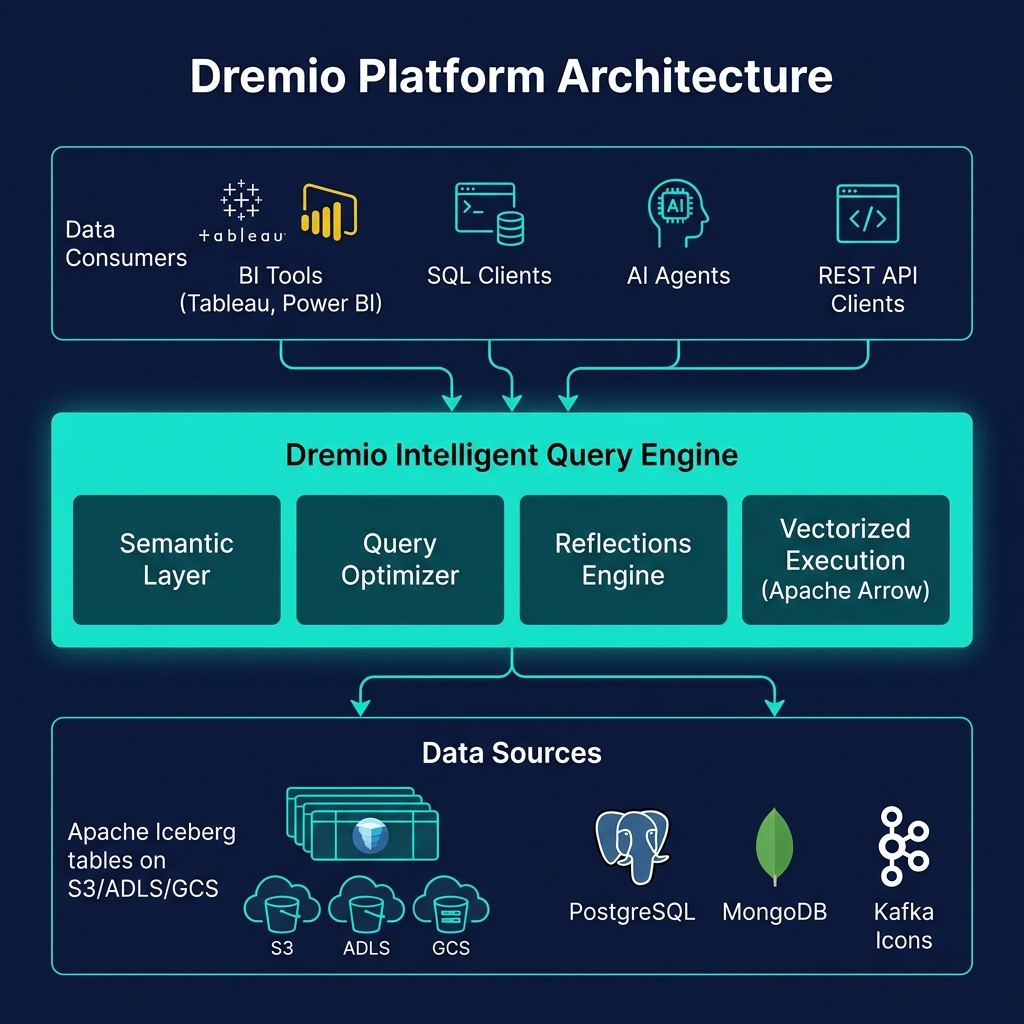

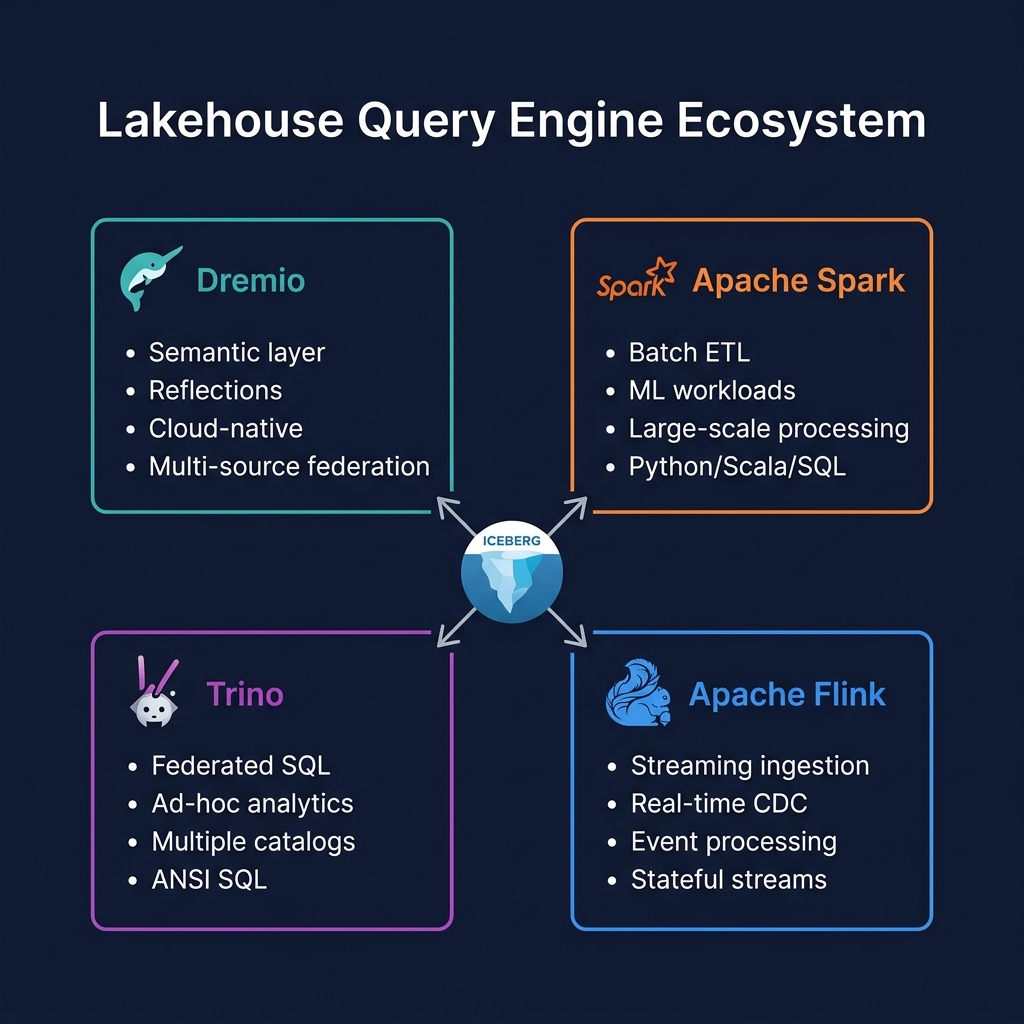

Predicate Pushdown to Federated Sources

In federated query scenarios — where Dremio or Trino queries a PostgreSQL table alongside Iceberg tables — predicate pushdown extends to the source database. The engine pushes filter predicates from the SQL WHERE clause into the SQL query sent to PostgreSQL, ensuring that the database applies the filter before transmitting results over the network.

Without predicate pushdown to PostgreSQL, the engine would request all rows from the database table and filter them locally — unnecessarily transferring potentially millions of rows over the network. With pushdown, only matching rows are returned from PostgreSQL, minimizing network transfer and database load.
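The difference in rows transferred can be demonstrated with a small, runnable sketch. It uses Python's built-in sqlite3 module as a stand-in for PostgreSQL so that it runs without a database server. The customers table, its contents, and the two fetch functions are hypothetical and only model what a federated engine does.

```python
import sqlite3

# In-memory SQLite database standing in for the remote PostgreSQL source.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT)")
conn.executemany(
    "INSERT INTO customers (id, region) VALUES (?, ?)",
    [(i, "EU" if i % 10 == 0 else "US") for i in range(1, 1001)],
)


def fetch_without_pushdown(conn, region):
    """Pull every row from the source, then filter in the engine."""
    rows = conn.execute("SELECT id, region FROM customers").fetchall()
    matching = [row for row in rows if row[1] == region]
    return matching, len(rows)  # (result, rows transferred)


def fetch_with_pushdown(conn, region):
    """Send the filter to the source so only matching rows come back."""
    rows = conn.execute(
        "SELECT id, region FROM customers WHERE region = ?", (region,)
    ).fetchall()
    return rows, len(rows)  # (result, rows transferred)


local_result, local_transferred = fetch_without_pushdown(conn, "EU")
pushed_result, pushed_transferred = fetch_with_pushdown(conn, "EU")

print(local_transferred, pushed_transferred)  # 1000 100
```

Both approaches return the same 100 rows, but the pushed-down version transfers a tenth of the data in this example. It also lets the source database use its own indexes to evaluate the filter.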

Summary

Predicate pushdown is arguably the single most impactful query optimization technique in the data lakehouse. By pushing filter conditions into Iceberg's metadata hierarchy and Parquet's file statistics, it can eliminate the vast majority of data from consideration before any data files are read from storage, turning what would be full-table scans into targeted reads of a small fraction of the data. Every major lakehouse query engine implements predicate pushdown, and most data engineering decisions that affect data layout (partitioning, sorting, Z-ordering) are largely about making predicate pushdown more effective.