What Is Apache Spark?

Apache Spark is the world's most widely deployed open-source distributed data processing engine. Originally developed at UC Berkeley's AMPLab in 2009 and donated to the Apache Software Foundation in 2013, Spark enables processing of datasets from gigabytes to petabytes by distributing computation across a cluster of machines, with a unified API spanning batch processing, SQL analytics, streaming, and machine learning.

Spark's key design insight was replacing Hadoop MapReduce's disk-based intermediate storage with in-memory processing using resilient distributed datasets (RDDs). This made Spark 10–100x faster than MapReduce for iterative algorithms and interactive queries — transforming the data engineering landscape.

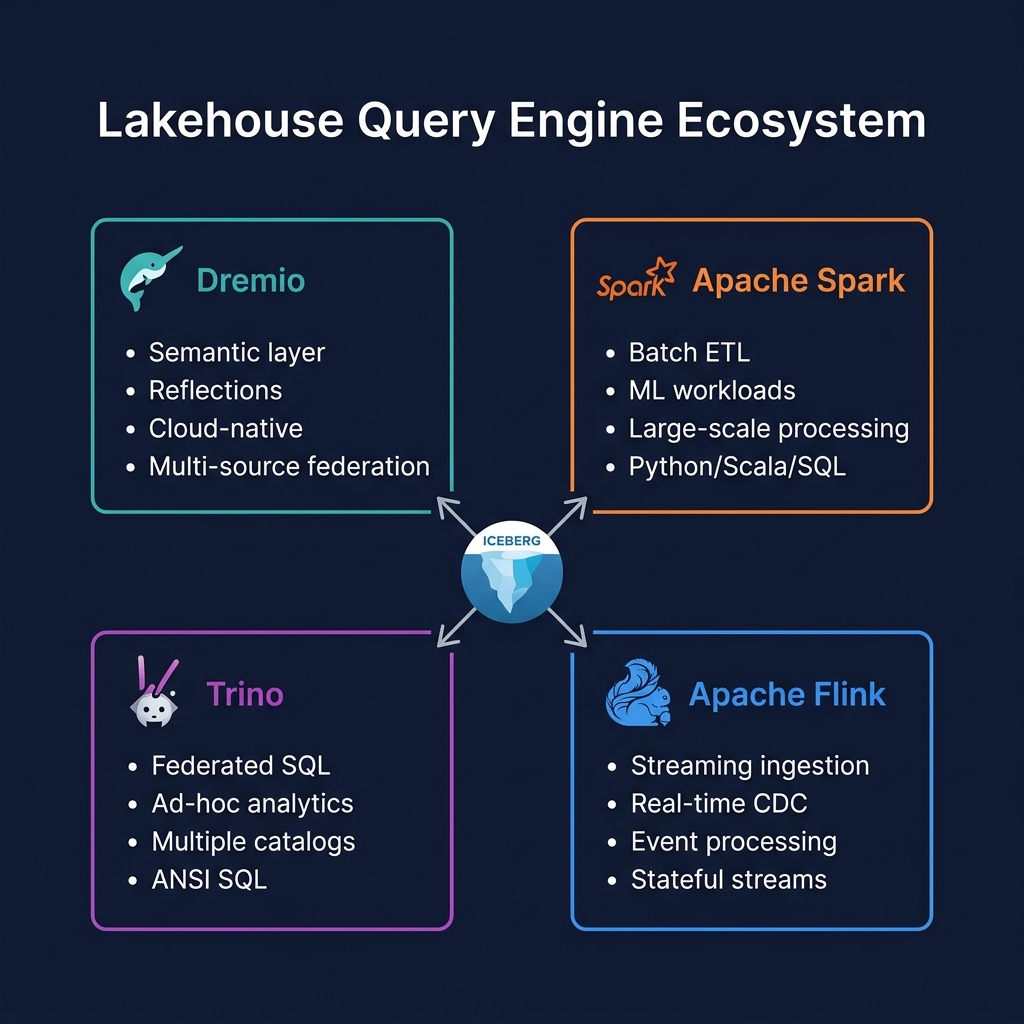

In the 2025 lakehouse ecosystem, Spark occupies a specific, critical role: it is the primary engine for large-scale ETL and ELT transformations, the most feature-complete Apache Iceberg write client, and the backbone of ML feature engineering pipelines. It is the workhorse engine that prepares data, while engines like Dremio and Trino serve that prepared data to analysts.

Spark and Apache Iceberg

Apache Spark has the most complete and mature Apache Iceberg integration of any engine. Spark was the primary development environment for Iceberg during its early years, and Spark + Iceberg remains the most widely used combination for Iceberg table creation and management.

Spark's Iceberg capabilities include: creating Iceberg tables (DDL), reading and writing with full ACID compliance, all V2 DML operations (INSERT, UPDATE, DELETE, MERGE INTO with both CoW and MoR), schema evolution DDL, partition evolution, time travel queries, snapshot management (expire, rollback), compaction (RewriteDataFiles, RewriteManifests), and the full Iceberg stored procedure API (CALL catalog.system.*).

Configuring Spark with Iceberg requires adding the Iceberg Spark extension to the SparkSession: spark.jars.packages org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.x.x and setting the SparkCatalog configuration to point to an Iceberg catalog backend (REST, Nessie, Glue, or Hive Metastore).

PySpark for Data Engineering

PySpark — the Python API for Apache Spark — is the dominant interface for data engineering teams working with Spark in the modern lakehouse. PySpark provides:

- DataFrame API: A pandas-like API for distributed data transformation, with lazy evaluation and a query optimizer (Catalyst) that optimizes execution plans

- Spark SQL: Full SQL interface for querying DataFrames and Iceberg tables — the same SQL familiar to analysts but executing on distributed Spark clusters

- ML Pipelines (MLlib): Distributed ML algorithms, feature engineering, and model training on large datasets

- Spark Structured Streaming: Streaming DataFrame API for continuous processing of Kafka, Kinesis, or file streams into Iceberg tables

Spark vs. Dremio for Analytics

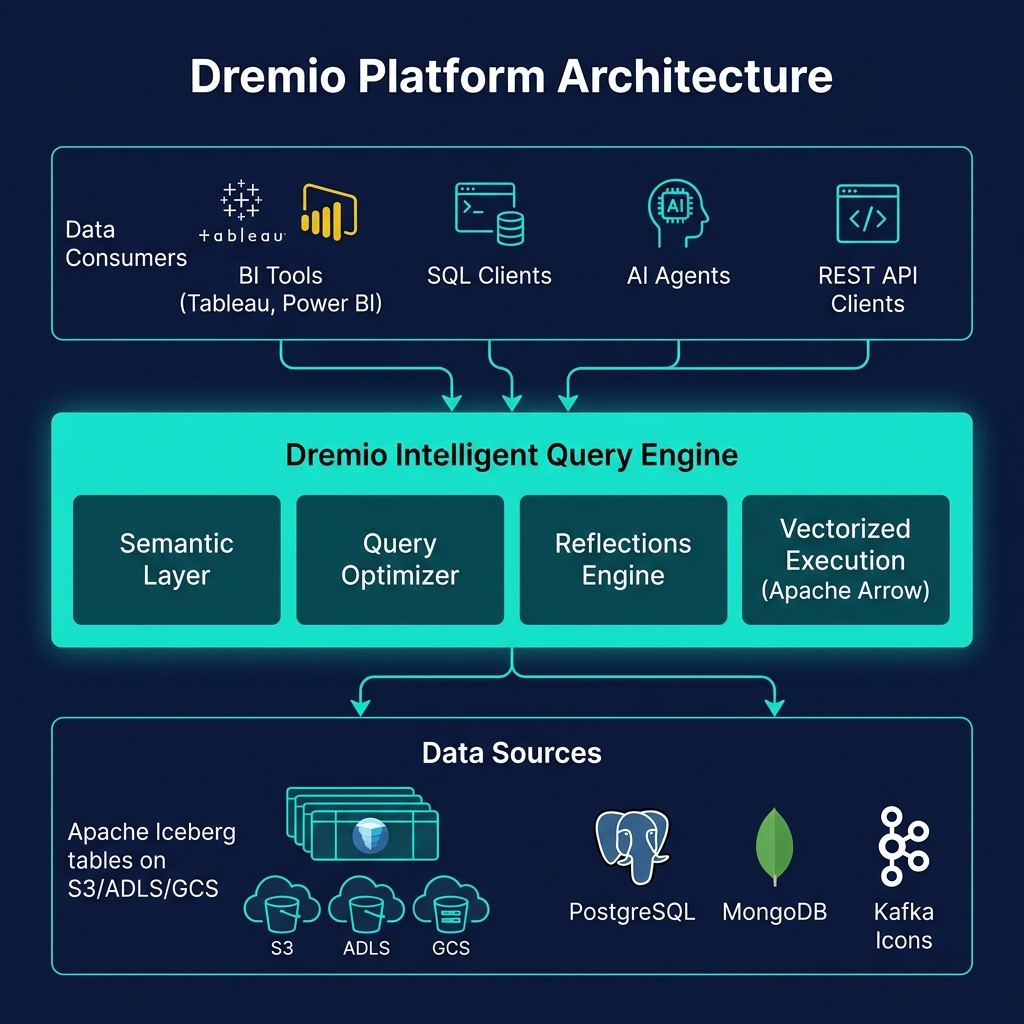

Spark and Dremio serve different analytical needs and are best understood as complementary rather than competing:

| Use Case | Spark | Dremio |

|---|---|---|

| Large-scale batch ETL | ✅ Primary choice | Limited |

| Iceberg table management | ✅ Most complete | ✅ Automated |

| Interactive SQL (sub-second) | Poor (startup latency) | ✅ Reflections |

| BI tool connectivity | Via Spark Connect / JDBC | ✅ ODBC/JDBC/Arrow |

| ML workloads | ✅ MLlib, PySpark | Not applicable |

| Semantic layer | None built-in | ✅ Virtual Datasets |

Summary

Apache Spark is the essential ETL and ML engine of the data lakehouse. Its deep Apache Iceberg integration, massive ecosystem (PySpark, MLlib, Structured Streaming), and proven scalability make it irreplaceable for the data transformation workloads that produce the Silver and Gold Iceberg tables that power analytics. In the multi-engine lakehouse, Spark is the engine that creates and manages data; engines like Dremio and Trino are the engines that serve that data efficiently to analysts and applications.