What Is the Medallion Architecture?

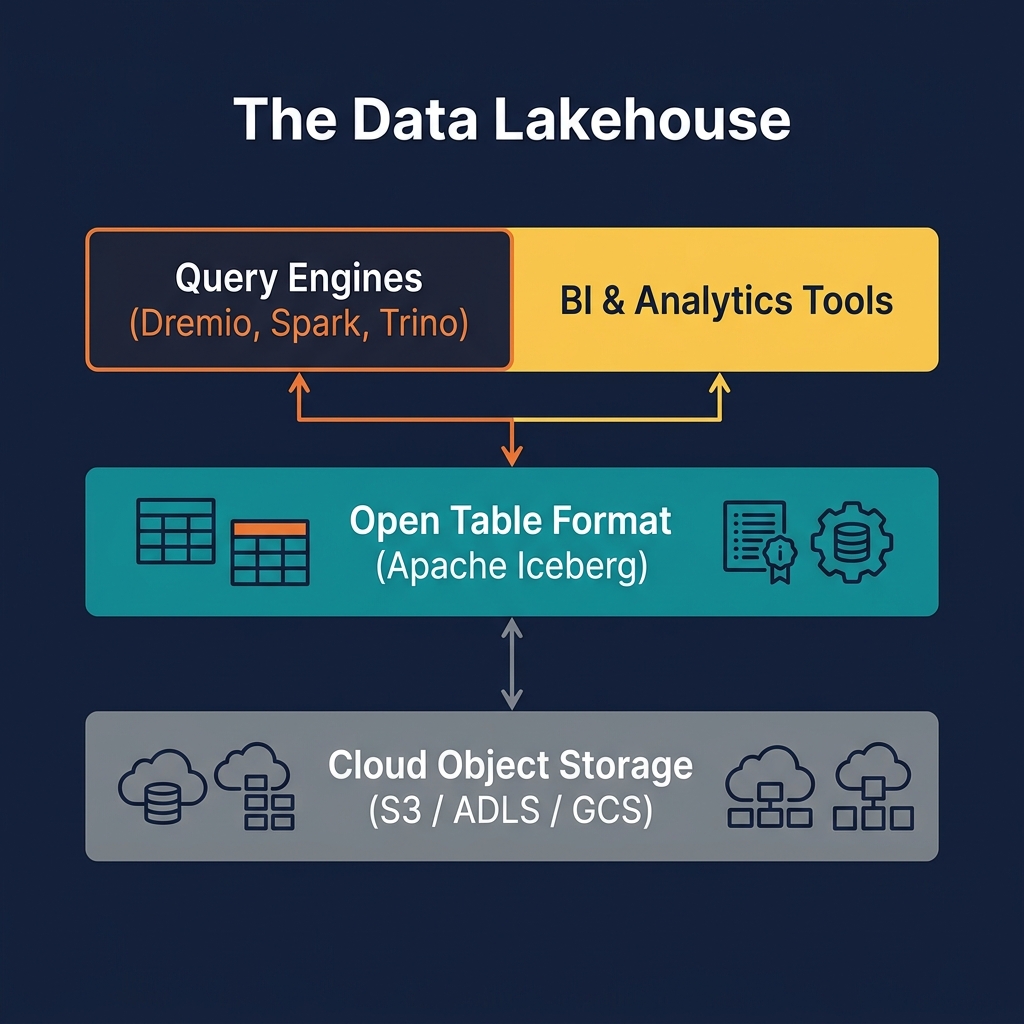

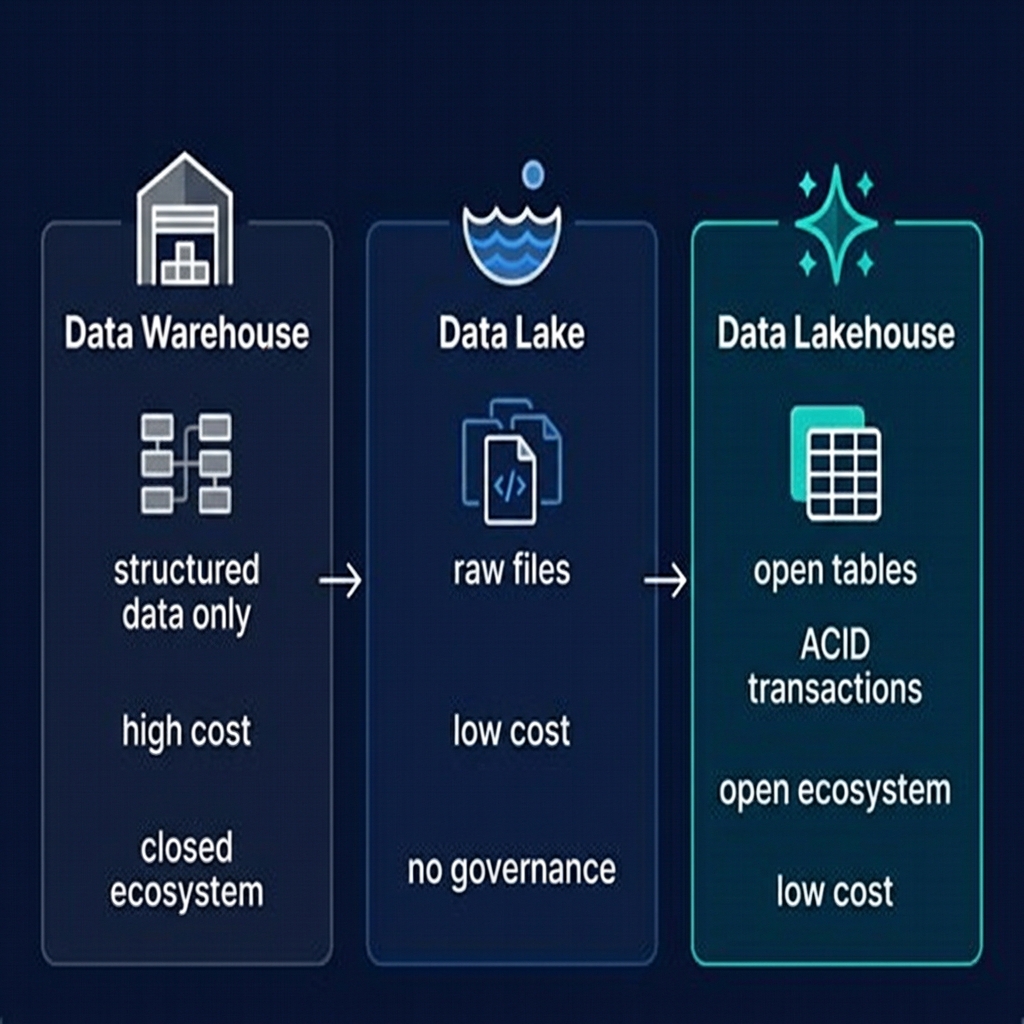

The Medallion Architecture (also called multi-hop architecture or layered lakehouse architecture) is a data organization pattern that structures a data lakehouse into three progressively refined data quality tiers: Bronze, Silver, and Gold. Each tier serves a distinct purpose in the data lifecycle, from raw ingestion to business-ready analytics.

The Bronze layer is the raw landing zone — data arrives here exactly as it was produced by source systems, with no transformation or quality enforcement. The Silver layer applies cleaning, validation, and conforming transformations, producing a reliable, integrated dataset that represents a single version of truth for each business entity. The Gold layer builds business-specific aggregations, dimensional models, and feature sets that are directly consumed by BI tools, dashboards, data scientists, and increasingly, AI agents.

The name "Medallion" is a metaphor: raw ore (Bronze) is refined into a more valuable material (Silver), which is then further refined into the highest-quality, most valuable output (Gold). Each step adds value through quality improvement, and each layer serves different consumers with different needs.

The Medallion Architecture was popularized by Databricks as a Delta Lake best practice but has since become the de facto standard for organizing data across all lakehouse platforms, regardless of table format. It is equally applicable to Apache Iceberg-based lakehouses (where it is the most common organizational pattern), Delta Lake, and Apache Hudi.

Understanding the Medallion Architecture is foundational to understanding how production lakehouses are designed, built, and operated. It is the pattern that separates a mature, well-engineered lakehouse from an ad-hoc collection of files.

The Bronze Layer: Raw Ingestion

The Bronze layer is the first landing point for all incoming data. Its defining characteristic is fidelity to the source: data is stored exactly as it was received, without transformation, filtering, or quality enforcement.

What Goes in Bronze

Bronze tables contain the raw output of ingestion pipelines. For relational database sources, this means complete row-level records — including all columns, even those not needed downstream. For event streams, Bronze holds every event exactly as produced, including duplicates and out-of-order records. For files (CSV, JSON, XML), Bronze stores the parsed content, often with an additional metadata column recording the source file path and ingestion timestamp.

Bronze Table Design

Bronze tables are typically append-only — new records are inserted, never updated in place. This append-only design makes ingestion simple and fast, and it preserves the complete historical record of every record ever received. If a source system sends a corrected version of a record, both the original and the correction land in Bronze; the correction logic is applied in the Silver transformation.

Common additional columns in Bronze tables include: _ingested_at (the timestamp when the record arrived), _source_system (the name of the source), and _source_file (for file-based sources). These audit columns enable data lineage tracing and debugging of downstream quality issues.

Bronze Partitioning

Bronze tables are almost always partitioned by ingestion date — ingested_date or year/month/day. This partition strategy makes it efficient to process only recently arrived data in incremental Silver refresh jobs.

Bronze Access Control

Bronze data is typically restricted to data engineers and automated pipeline processes. Business analysts and data scientists generally do not have direct access to Bronze tables — the raw data may contain sensitive PII, duplicate records, or invalid values that would produce incorrect analysis if queried directly.

The Silver Layer: Cleansed and Conformed Data

The Silver layer is where the real data engineering work happens. Silver tables transform raw Bronze data into a clean, reliable, integrated dataset that represents a single, consistent version of truth for each business entity or domain.

Silver Transformations

The Bronze-to-Silver transformation pipeline typically performs:

- Deduplication: Identifying and removing or collapsing duplicate records from the same or multiple source systems

- Null handling: Replacing nulls with appropriate defaults, flagging records with critical null values, or filtering invalid records

- Type coercion: Converting string-encoded dates, numbers, and booleans to their proper types

- Entity resolution: Matching customer records from CRM and ERP systems to a single canonical customer identity

- Schema conforming: Standardizing column names, date formats, and categorical values across sources (e.g., mapping 'M'/'F'/'Male'/'Female' to a standardized gender taxonomy)

- Business rule application: Calculating derived fields, applying product classification rules, and enriching records with reference data lookups

Silver Table Design

Unlike Bronze, Silver tables support upserts and deletes. When a source system sends a corrected record, the Silver pipeline uses Apache Iceberg's MERGE INTO capability to upsert the correction into the Silver table without rewriting the entire partition. This is where Iceberg's Merge-on-Read or Copy-on-Write strategies are configured based on the read/write frequency trade-off.

Silver tables are often organized by business domain — silver.customers, silver.orders, silver.products — with each table representing a clean, integrated view of a single entity type across all sources.

Silver Access

Silver tables are accessible to data engineers, senior data analysts, and data scientists who need access to clean, integrated data without the constraints of pre-defined aggregations. Most machine learning feature engineering happens at the Silver layer.

The Gold Layer: Business-Ready Analytics

The Gold layer contains the finished data products — the aggregations, dimensional models, and domain-specific datasets that are directly consumed by business users, BI tools, dashboards, and executive reporting.

Gold Table Design Patterns

Gold tables are often designed following dimensional modeling conventions — fact tables and dimension tables in a star or snowflake schema. Common Gold table types include:

- Aggregate tables: Pre-aggregated metrics by date, region, product, and other common dimensions — e.g., daily sales by product category and store

- Slowly Changing Dimension (SCD) tables: Dimension tables that track how attribute values change over time — e.g., customer address history, product price history

- Wide, denormalized tables: Denormalized tables that pre-join the fact and all relevant dimensions into a single wide table, eliminating join overhead at query time

- Feature tables: Computed ML features for model training and inference — e.g., customer 30-day purchase frequency, product demand signals

Gold Performance Optimization

Gold tables receive the most intensive performance optimization of any layer:

- Compaction: Merging small files into optimally sized Parquet files for efficient sequential reads

- Z-Ordering: Co-locating frequently filtered columns within data files to maximize data skipping

- Dremio Reflections: Transparent materialized views that make sub-second query response possible for complex aggregations on large Gold tables

Gold Access

Gold tables are the primary interface for business analysts and BI tools. They are connected to Tableau, Power BI, Looker, and other visualization tools via Dremio's ODBC, JDBC, or Arrow Flight connectors. Access control at the Gold layer is typically the strictest — only specific roles can see specific tables or columns.

Pipeline Design: Bronze to Silver to Gold

The pipelines that move data between Medallion layers follow a consistent pattern: incremental processing, idempotent execution, and observable quality metrics.

Incremental Processing

Production Medallion pipelines process only new or changed data in each run, not the entire dataset. In Apache Iceberg, this is achieved using incremental snapshot reads — the pipeline tracks the last processed snapshot ID and reads only the records added since that snapshot. This makes pipelines fast and economical regardless of how large the total dataset grows.

Idempotent Execution

Pipeline runs must be idempotent — running the same pipeline twice on the same input must produce the same output. This property is essential for recovery from failures: if a pipeline run fails partway through, it can be safely restarted from the beginning without producing duplicate records. Iceberg's ACID semantics make idempotent writes straightforward — a failed transaction leaves no partial state behind.

Data Quality Gates

Between each layer, data quality checks serve as gates. Before Silver tables are marked as ready for consumption, the pipeline validates: null rates below threshold, record counts within expected range, no new unexpected categorical values, referential integrity between related tables. Failed quality checks halt the pipeline and trigger alerts.

Orchestration

Medallion pipelines are orchestrated by tools like Apache Airflow, Prefect, or Dagster. Each layer's pipeline is a DAG (Directed Acyclic Graph) of tasks, with dependencies between layers enforced — Silver jobs cannot run until Bronze is confirmed fresh, Gold jobs cannot run until Silver is confirmed clean.

Medallion Architecture and Apache Iceberg

The Medallion Architecture and Apache Iceberg are designed for each other. Iceberg's features map directly to the needs of each layer:

Bronze + Iceberg

Iceberg's efficient append operations and partition evolution make Bronze ingestion fast and flexible. As data volume grows, partitioning strategies can be updated without rewriting historical data. Time travel allows engineers to investigate what data existed in Bronze at any point in the past — essential for debugging quality issues.

Silver + Iceberg

Iceberg's MERGE INTO support and row-level delete capabilities make Silver upsert pipelines efficient. Schema evolution allows new source fields to be added to Silver tables without breaking downstream queries. Iceberg's snapshot isolation ensures that Silver readers always see a consistent state, even while pipelines are updating Silver tables.

Gold + Iceberg

Compaction and Z-ordering at the Gold layer are native Iceberg operations, ensuring optimal file layout for BI query patterns. Dremio's Automated Table Optimization can manage these operations automatically, keeping Gold tables performing at peak efficiency without manual DBA intervention.

Medallion Architecture with Dremio

Dremio is particularly well-suited to the Medallion Architecture because it provides value at every layer:

Bronze Data Exploration

Data engineers can use Dremio to interactively explore Bronze tables — running SQL queries to inspect raw records, check null rates, and identify quality issues — without writing Spark jobs. Dremio's ability to query Iceberg tables without pre-warming or configuration makes it an excellent exploratory tool for raw data.

Silver Data Curation

Through Virtual Datasets, Dremio allows data engineers to define Silver-layer views on top of Bronze data — joins, filters, type casts — as SQL views without physically writing transformed data. This is ideal for exploratory curation work before committing to a full pipeline implementation.

Gold Data Serving

Dremio's primary role in the Medallion Architecture is serving Gold data to BI tools and analysts. Reflections — Dremio's materialized acceleration layer — can be configured on Gold Iceberg tables to provide sub-second query response times for complex aggregations, enabling interactive dashboard performance on petabyte-scale data.

Automated Optimization

Dremio's Automated Table Optimization automatically compacts, clusters, and vacuums Gold Iceberg tables without requiring manual scheduling or Spark jobs. This dramatically reduces the operational overhead of maintaining Gold table performance.

Streaming Medallion Architecture

The traditional Medallion Architecture uses batch processing between layers. A streaming variant — increasingly common in modern lakehouses — applies the same Bronze/Silver/Gold quality tiers but moves data between them via continuous streaming pipelines rather than scheduled batch jobs.

Streaming Bronze Ingestion

In a streaming Medallion, events from Apache Kafka or other message brokers are continuously appended to Bronze Iceberg tables using a streaming writer — Apache Flink, Spark Structured Streaming, or a managed connector. Events arrive in Bronze within seconds of being produced by source systems.

Streaming Silver Processing

A continuous Apache Flink job reads Bronze events, applies deduplication and transformation logic, and writes cleaned records to Silver Iceberg tables. Flink's exactly-once semantics ensure that each event is processed exactly once, even in the presence of failures.

Latency Trade-offs

Streaming Medallion architectures achieve near-real-time data freshness — data can be available in Gold within minutes of being produced in a source system. The trade-off is operational complexity: streaming jobs require more careful monitoring, failure handling, and resource management than batch jobs. For use cases where data freshness is critical — fraud detection, real-time dashboards, operational analytics — the complexity is justified. For most reporting workloads, nightly or hourly batch processing is sufficient.

Common Mistakes in Medallion Architecture Implementation

The Medallion Architecture is conceptually simple, but production implementations frequently encounter the following pitfalls:

Over-engineering Bronze

A common mistake is applying complex transformations in the Bronze layer — schema conforming, business rule application, enrichment. Bronze should be maximally simple: land the raw data, nothing more. Complexity in Bronze makes debugging difficult and loses the benefits of having a pristine historical record.

Too Many Layers

Some teams add additional layers — Platinum, Diamond, or domain-specific staging layers — creating a pipeline so deep that data latency becomes unacceptable and debugging requires tracing through five or six transformation stages. Unless your organization has genuinely complex multi-stage requirements, stick to three tiers.

Inconsistent Upsert Patterns

Teams that use different upsert strategies (full refresh, append-only, MERGE INTO) inconsistently across Silver tables create fragile, unpredictable data products. Standardize on a single upsert pattern per entity type and document it clearly.

Neglecting Compaction at Gold

Gold tables accumulate small files from incremental loads. Without regular compaction, query performance degrades as engines must open thousands of small files. Dremio's automated optimization or scheduled Spark compaction jobs are essential for Gold layer health.

Skipping Data Quality Gates

The temptation to skip data quality checks between layers to simplify pipelines is strong — but it means that bad data silently propagates through all three layers and surfaces only when a business stakeholder reports an incorrect dashboard. Quality gates at each layer transition are non-negotiable in production systems.

Summary

The Medallion Architecture is the organizational backbone of every well-engineered data lakehouse. By separating raw ingestion (Bronze), data cleansing (Silver), and business analytics (Gold) into distinct, progressively refined layers, it creates a system that is observable, debuggable, and resilient to the inevitable messiness of real-world data.

Apache Iceberg provides the transactional foundation — MERGE INTO for upserts, snapshot isolation for concurrent access, time travel for debugging — that makes the Medallion Architecture practical at scale. Dremio provides the query and serving layer that makes Gold data instantly available to business users with sub-second performance.

Teams adopting the lakehouse for the first time should implement the Medallion Architecture from the start — it is far easier to establish the right organizational patterns early than to retrofit them onto an existing collection of unorganized files and tables. The discipline it imposes pays dividends in data quality, operational reliability, and team productivity throughout the life of the data platform.