What Is Decoupled Storage and Compute?

Decoupled storage and compute is the architectural principle that the system responsible for storing data and the system responsible for processing that data should be independent, separately scalable, and separately priced components of the overall data platform.

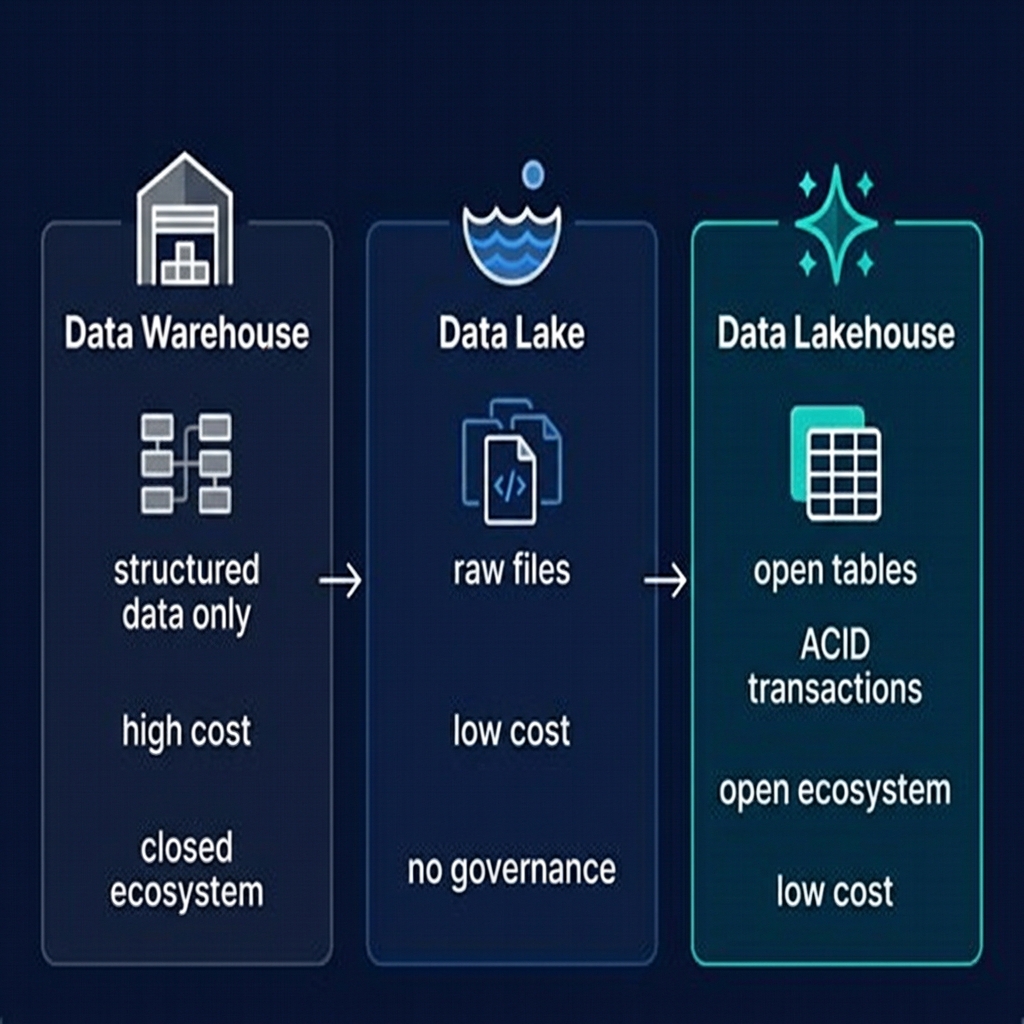

In a coupled architecture, such as a traditional on-premises data warehouse or a relational database, storage and compute are bound together in the same physical infrastructure. To store more data, you buy more servers. To process queries faster, you add more of the same servers. You cannot scale compute without also scaling storage, or vice versa. During periods of low query activity, you pay for idle compute capacity. During periods of high query activity, you are limited to the compute that happens to ship with your storage nodes.

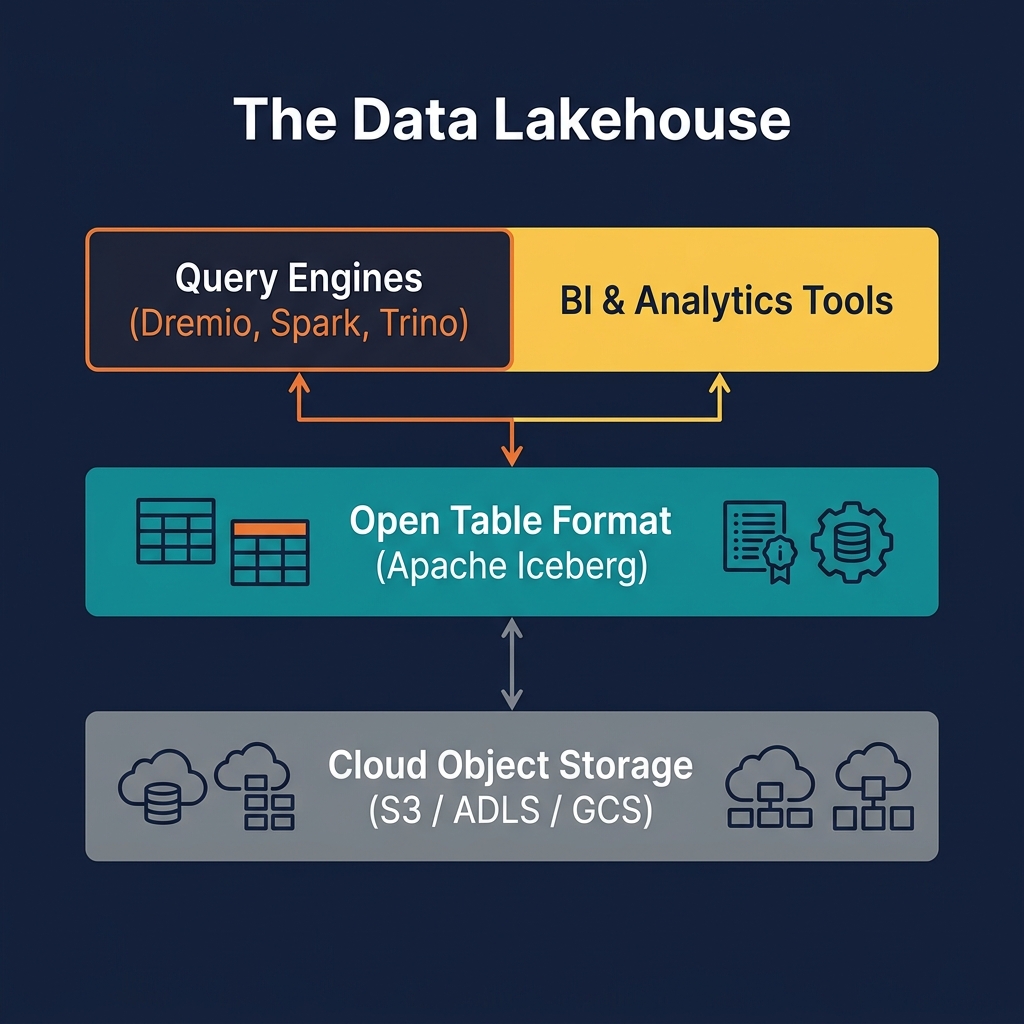

In a decoupled architecture, such as the modern data lakehouse, data is stored separately in cloud object storage (Amazon S3, Azure Data Lake Storage (ADLS), Google Cloud Storage (GCS)), and compute engines (Dremio, Apache Spark, Trino) connect to that storage over the network to process queries. Storage scales independently as data grows: you pay only for the bytes stored. Compute scales independently as query demand changes: you spin up large clusters for heavy workloads and shut them down when idle.

This decoupling is not just an operational convenience — it is a fundamental architectural shift that enables the multi-engine interoperability that defines the lakehouse. Because data is stored in open formats in neutral object storage, any engine can access it — not just the vendor that sold you the compute platform.

The History: From Coupled to Decoupled

The path from coupled to decoupled architectures is the story of how the economics of computing changed over the past two decades.

The Coupled Era: On-Premises Warehouses

Traditional on-premises data warehouses such as Teradata, Oracle Exadata, and IBM Netezza were appliance-style systems, typically shared-nothing MPP designs in which each node held both storage and compute. Adding capacity meant adding nodes, each with both disks and CPUs. These systems delivered excellent performance for their time, but storage and compute could only scale together: if you needed more query capacity and already had plenty of storage, you had to buy more storage anyway.

The First Decoupling: Hadoop HDFS

Apache Hadoop introduced the first large-scale decoupling experiment in enterprise data processing. HDFS (Hadoop Distributed File System) was a distributed file system running across commodity hardware, and MapReduce compute jobs were scheduled separately. In theory, compute could be scaled independently. In practice, Hadoop clusters still co-located compute and storage on the same nodes for data locality, because moving data over the 1GbE networks common at the time was expensive.

The Cloud Revolution: True Decoupling

The rise of cloud object storage — S3 (2006), GCS (2010), ADLS (2015) — and high-speed networking (25GbE, 100GbE) made true decoupling practical. With 100Gbps network connections, reading data from remote object storage became fast enough that data locality was no longer a performance requirement. Compute engines could run on separate virtual machines, reading from S3 at network speeds that rivaled local disk reads.

Snowflake, founded in 2012, was a landmark: its architecture completely decoupled storage (in S3) from compute (virtual warehouses). It proved that a cloud data warehouse could deliver excellent analytical performance without co-locating storage and compute, and much of the cloud data platform industry followed.

How Decoupled Storage and Compute Works

In a decoupled lakehouse architecture, the data path from storage to query result has several components:

Storage Layer: Cloud Object Storage

Data lives in cloud object storage as immutable files, primarily Apache Parquet for tabular data. Object storage services such as Amazon S3 are designed for 11 nines (99.999999999%) of durability, offer essentially unlimited capacity, and charge very little per GB. The storage layer is completely passive: it does nothing with the data except store it durably and serve file read requests.

Table Format Layer: Apache Iceberg

Apache Iceberg sits logically between storage and compute. It maintains metadata — manifest files and snapshot logs — that tell compute engines which files belong to which table, what schemas those tables have, and what the current committed state of each table is. This metadata layer is what enables concurrent, safe access from multiple compute engines simultaneously.

Catalog Layer

The Iceberg REST Catalog (implemented by Apache Polaris, Project Nessie, or cloud-managed catalogs) provides a central registry that maps table names to their current metadata location. When a compute engine wants to query a table, it asks the catalog where the table's metadata is, then follows the metadata pointers to find the actual data files.
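
The lookup chain described above can be sketched in a few lines of Python. This is a toy model, not the Iceberg REST API: `CATALOG`, `METADATA_FILES`, the bucket paths, and `resolve_data_files` are all hypothetical stand-ins, and real Iceberg metadata reaches data files through manifest lists and manifests rather than a flat list.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    snapshot_id: int
    # Simplification: real Iceberg snapshots point to a manifest list,
    # which points to manifests, which list the data files.
    data_files: list[str]


@dataclass
class TableMetadata:
    current_snapshot_id: int
    snapshots: dict[int, Snapshot]


# Hypothetical in-memory stand-ins for the catalog service and for
# metadata files that would normally live in object storage.
CATALOG = {"sales.orders": "s3://example-bucket/orders/metadata/v2.metadata.json"}
METADATA_FILES = {
    "s3://example-bucket/orders/metadata/v2.metadata.json": TableMetadata(
        current_snapshot_id=2,
        snapshots={
            1: Snapshot(1, ["s3://example-bucket/orders/data/a.parquet"]),
            2: Snapshot(2, ["s3://example-bucket/orders/data/a.parquet",
                            "s3://example-bucket/orders/data/b.parquet"]),
        },
    )
}


def resolve_data_files(table_name: str) -> list[str]:
    metadata_location = CATALOG[table_name]        # 1. ask the catalog
    metadata = METADATA_FILES[metadata_location]   # 2. read the table metadata
    snapshot = metadata.snapshots[metadata.current_snapshot_id]  # 3. current state
    return snapshot.data_files                     # 4. files the engine may scan


files = resolve_data_files("sales.orders")
```

The point of the sketch is that the catalog holds only one small pointer per table; everything else is files in object storage that any engine can follow.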

Compute Layer: Query Engines

Query engines — Dremio, Spark, Trino, Flink — connect to the catalog to discover tables, read Iceberg metadata to plan query execution (identifying which files are relevant, which partitions to prune), and then read only the necessary Parquet files from object storage. The engine's compute nodes apply vectorized execution, aggregation, and filtering in memory and return results to the user.

Benefits of Decoupled Storage and Compute

Decoupling delivers concrete, measurable benefits across cost, flexibility, and resilience:

Independent Scaling

Storage and compute scale on entirely different curves. Data volume tends to grow steadily over time, so you can predict next year's storage cost fairly accurately. Query demand is spiky: heavy during business hours, light overnight and on weekends. In a decoupled architecture, you provision compute for peak demand and scale it back or shut it down when demand drops. Storage costs are unaffected by compute scaling decisions.

Multi-Engine Interoperability

Because data is in neutral object storage in open format, any compliant engine can read it. Your data engineering team can use Apache Spark for batch ETL. Your data science team can use Python with PyIceberg for ML feature engineering. Your BI team can use Dremio for interactive SQL. Your streaming team can use Apache Flink for real-time ingestion. All from the same Apache Iceberg tables, simultaneously, without copying data.

Cost Efficiency

Cloud object storage costs approximately $0.023/GB/month for the S3 Standard tier. A proprietary cloud data warehouse can charge 5–15x that for storage. At petabyte scale, this difference is worth millions of dollars per year. Decoupling eliminates the proprietary storage tax.
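
To check the "millions of dollars per year" claim, here is the arithmetic for one petabyte, using only the figures quoted above. The decimal petabyte (1,000,000 GB) and the helper name `annual_storage_cost` are assumptions made for the example; real bills also include request and transfer charges that this ignores.

```python
S3_STANDARD_PER_GB_MONTH = 0.023  # price quoted in the text
GB_PER_PB = 1_000_000             # assumption: decimal petabyte


def annual_storage_cost(gb: float, price_per_gb_month: float) -> float:
    """Yearly storage bill for `gb` gigabytes at a flat monthly per-GB price."""
    return gb * price_per_gb_month * 12


object_store = annual_storage_cost(GB_PER_PB, S3_STANDARD_PER_GB_MONTH)
warehouse_low = annual_storage_cost(GB_PER_PB, S3_STANDARD_PER_GB_MONTH * 5)
warehouse_high = annual_storage_cost(GB_PER_PB, S3_STANDARD_PER_GB_MONTH * 15)

print(f"object storage: ${object_store:,.0f}/year")
print(f"warehouse:      ${warehouse_low:,.0f} to ${warehouse_high:,.0f}/year")
print(f"difference:     ${warehouse_low - object_store:,.0f} "
      f"to ${warehouse_high - object_store:,.0f}/year")
```

At these rates, one petabyte costs about $276,000 per year in object storage versus roughly $1.4 million to $4.1 million at the 5–15x multiple, a gap of about $1.1 million to $3.9 million per petabyte per year.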

No Vendor Lock-In

When your data lives in open format in object storage you own, changing compute vendors becomes a configuration change, not a data migration. If a better query engine emerges, you adopt it without moving data. This shifts pricing power from the vendor to the customer.

Disaster Recovery and Multi-Region

In a coupled architecture, disaster recovery requires replicating both storage and compute. In a decoupled architecture, object storage replication (S3 Cross-Region Replication, for example) is sufficient — because compute is stateless, it can be provisioned in any region that has access to the replicated storage.

Decoupled Storage and Compute vs. Coupled Architectures

The performance and cost trade-offs between coupled and decoupled architectures have shifted dramatically as network speeds have improved:

| Dimension | Coupled (Traditional DW) | Decoupled (Lakehouse) |
|---|---|---|
| Storage cost | $0.20–$0.50/GB/month | $0.023/GB/month (S3 std.) |
| Compute cost | Always-on, fixed | Pay-per-query or auto-scaling |
| Engine flexibility | Single vendor only | Any Iceberg-compatible engine |
| Data format | Proprietary | Open (Parquet + Iceberg) |
| Multi-engine access | ❌ Not possible | ✅ Yes (simultaneous) |
| Read performance | Fast (local disk) | Excellent (100GbE + metadata optimization) |
| Write performance | Fast (local disk) | Good (Iceberg commit protocol) |
| Operational simplicity | Single system to manage | Multiple components (catalog, engine, storage) |

The main trade-off of decoupling is operational complexity — more components to configure, monitor, and maintain. Purpose-built lakehouse platforms like Dremio significantly reduce this complexity by integrating the catalog, query engine, and management layer into a cohesive product.

Challenges of Decoupled Architectures

Despite its benefits, decoupled architecture introduces challenges that practitioners must design for:

Network Latency

Reading data from object storage over the network introduces latency compared to reading from local disk. Modern engines mitigate this through aggressive predicate pushdown (reading fewer files), column pruning (reading fewer bytes), local SSD caching (Dremio, for example, caches frequently accessed Parquet files on local NVMe), and vectorized I/O (reading data in large, sequential chunks). In practice, well-optimized lakehouse queries on properly maintained Iceberg tables can match or exceed warehouse query performance.

The Small File Problem

Frequent small writes — common in streaming ingestion scenarios — produce many small Parquet files. Reading thousands of small files from object storage involves high latency overhead (one HTTP request per file). Compaction — merging small files into optimally sized ones (typically 128MB–512MB) — is the solution. Dremio's automated table optimization handles this transparently.
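
A compaction planner can be sketched as a simple greedy grouping of small files into bins no larger than the target size. This is an illustrative sketch only: `plan_compaction` is a hypothetical helper, the 256 MB target is one value from the range mentioned above, and it is not how Dremio or Iceberg's own rewrite procedures are implemented.

```python
MB = 1024 * 1024


def plan_compaction(file_sizes, target_bytes=256 * MB):
    """Group small files (sizes in bytes) into bins of at most target_bytes.

    Files already at or above the target are left alone. Each returned group
    would be rewritten as one larger file.
    """
    groups, current, current_size = [], [], 0
    for size in sorted(file_sizes, reverse=True):
        if size >= target_bytes:
            continue  # already big enough, no rewrite needed
        if current and current_size + size > target_bytes:
            groups.append(current)          # bin is full, start a new one
            current, current_size = [], 0
        current.append(size)
        current_size += size
    if current:
        groups.append(current)
    return groups


# 1,000 one-megabyte files, as a streaming job might produce.
groups = plan_compaction([1 * MB] * 1000)
print(len(groups), "output files instead of 1000")
```

With these inputs the planner produces four groups, so a full scan issues four large reads rather than a thousand small ones.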

Consistency Across Engines

When multiple engines write to the same table concurrently, ensuring consistency requires a robust transaction protocol. Apache Iceberg uses optimistic concurrency control: each writer prepares new metadata and then atomically swaps the table's current metadata pointer, and a writer that loses the race retries against the newly committed state. This provides serializable isolation between writers without requiring a distributed lock manager.
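
The pointer swap is essentially a compare-and-swap with retry. The sketch below models it with a hypothetical `InMemoryCatalog` class and represents table metadata as a tuple of file names; a real Iceberg commit also re-validates the change against the new table state before retrying, which this toy version omits.

```python
import threading


class InMemoryCatalog:
    """Hypothetical catalog holding a single table's metadata pointer."""

    def __init__(self, initial):
        self._pointer = initial
        self._lock = threading.Lock()  # stands in for the catalog's atomic swap

    def current(self):
        return self._pointer

    def compare_and_swap(self, expected, new):
        """Install `new` only if the pointer still equals `expected`."""
        with self._lock:
            if self._pointer != expected:
                return False  # another writer committed first
            self._pointer = new
            return True


def commit(catalog, make_new_metadata, max_retries=5):
    """Optimistic commit: build on the current state, swap, retry on conflict."""
    for _ in range(max_retries):
        base = catalog.current()
        new = make_new_metadata(base)
        if catalog.compare_and_swap(base, new):
            return new
    raise RuntimeError("commit failed after retries")


catalog = InMemoryCatalog(("a.parquet",))
stale = catalog.current()                               # writer 2 reads the state
commit(catalog, lambda base: base + ("b.parquet",))     # writer 1 commits first
lost_race = not catalog.compare_and_swap(stale, stale + ("c.parquet",))
commit(catalog, lambda base: base + ("c.parquet",))     # writer 2 retries on fresh state
```

Writer 2's first attempt fails because the pointer moved, and its retry appends to the state that already includes writer 1's file, so neither change is lost.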

Catalog as a Single Point of Failure

The catalog is the central coordinator of the decoupled architecture — if it is unavailable, engines cannot discover or access tables. High-availability deployment of the catalog (multiple replicas, health checks, automatic failover) is essential for production lakehouses.

Dremio and Decoupled Storage and Compute

Dremio is architected specifically for the decoupled storage and compute paradigm. Every aspect of its design assumes that data lives in open format on cloud object storage, and that Dremio's role is to provide the fastest, most efficient compute layer on top of that open storage.

Intelligent Query Engine

Dremio's query engine is built on Apache Arrow for vectorized in-memory processing and uses Iceberg metadata aggressively to minimize the data scanned from object storage. Its predicate pushdown capability evaluates filter conditions against Iceberg file-level statistics, skipping files that cannot contain relevant data before issuing any storage read requests.
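
File skipping from min/max statistics comes down to a range-overlap test. The following is a minimal sketch of the idea, not Dremio's implementation: the `DataFile` class, the `order_date` column, and the file names are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFile:
    path: str
    min_order_date: str  # ISO dates compare correctly as plain strings
    max_order_date: str


def prune(files, lo, hi):
    """Keep only files whose [min, max] range can overlap the filter [lo, hi]."""
    return [f for f in files if f.max_order_date >= lo and f.min_order_date <= hi]


files = [
    DataFile("jan.parquet", "2024-01-01", "2024-01-31"),
    DataFile("feb.parquet", "2024-02-01", "2024-02-29"),
    DataFile("mar.parquet", "2024-03-01", "2024-03-31"),
]

# WHERE order_date BETWEEN '2024-02-10' AND '2024-02-20'
to_scan = prune(files, "2024-02-10", "2024-02-20")
print([f.path for f in to_scan])
```

Only `feb.parquet` survives, so two of the three files are never requested from object storage.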

Reflections: Compute-Side Acceleration

Dremio Reflections are a form of compute-side caching: pre-computed, materialized representations of Iceberg data, stored as optimized Iceberg files and automatically selected by the query optimizer. Reflections live in storage (they are Iceberg tables themselves), but the decision to use them is made at compute time by Dremio's optimizer — perfectly aligned with the decoupled model.

Open Catalog Integration

Dremio's Open Catalog implements the Iceberg REST Catalog specification, meaning any Iceberg-compatible engine can connect to it — not just Dremio. This is the embodiment of decoupled architecture: one open catalog, many compute engines, all operating on the same shared storage.

The Future: Serverless and Elastic Compute

The logical endpoint of decoupled storage and compute is serverless query execution: compute that is provisioned per query, scales up from zero within seconds, and costs nothing when no queries are running. Cloud services like Amazon Athena, Google BigQuery, and Dremio Cloud are moving toward this model.

In a fully serverless lakehouse, there is no "always-on" compute infrastructure. A query submitted at 3 AM provisions the necessary compute, executes against Iceberg tables in S3, returns results, and the compute evaporates — all in seconds. The only persistent cost is the storage of data and metadata in object storage.

This model is ideal for workloads with unpredictable or highly variable query volumes — it eliminates the need to provision for peak demand and pay for idle capacity during off-peak hours. For steady-state, high-concurrency workloads, dedicated compute pools remain more economical.
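
The trade-off between serverless and dedicated compute can be framed as a break-even calculation. The hourly rates below are purely hypothetical numbers chosen for the example, not any vendor's pricing, and the model assumes serverless capacity carries a higher effective hourly rate but bills only for busy hours.

```python
HOURS_IN_MONTH = 730  # average month, an approximation


def monthly_cost_dedicated(hourly_rate: float) -> float:
    """Always-on pool: billed for every hour whether or not queries run."""
    return hourly_rate * HOURS_IN_MONTH


def monthly_cost_serverless(hourly_rate: float, busy_hours: float) -> float:
    """Serverless: billed only for the hours in which queries actually run."""
    return hourly_rate * busy_hours


def break_even_busy_hours(dedicated_hourly: float, serverless_hourly: float) -> float:
    """Busy hours per month above which the dedicated pool becomes cheaper."""
    return dedicated_hourly * HOURS_IN_MONTH / serverless_hourly


# Hypothetical rates: $10/hour dedicated, $25/hour-equivalent serverless.
break_even = break_even_busy_hours(10.0, 25.0)
print(f"break-even at {break_even:.0f} busy hours/month "
      f"({break_even / HOURS_IN_MONTH:.0%} utilization)")
```

Under these assumed rates the crossover sits at 292 busy hours per month, about 40% utilization: spiky workloads below that favor serverless, and steady workloads above it favor a dedicated pool.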

The decoupled architecture makes this evolution possible: because compute and storage are already fully independent, adding serverless execution is an operational change to the compute layer, not a fundamental architectural redesign. Organizations that adopted the decoupled lakehouse model early are positioned to take advantage of serverless compute advances as they mature.

Best Practices for Decoupled Lakehouse Design

Organizations implementing a decoupled storage and compute architecture should follow these proven best practices:

- Choose object storage in the same cloud region as your compute. Cross-region data transfer adds both latency and egress cost.

- Right-size files for read performance. Target 128MB–512MB Parquet files. Smaller files hurt read performance; larger files hurt partial reads. Automate compaction to maintain this range as data accumulates.

- Cache hot data in compute-local SSD. Dremio and other engines support caching frequently accessed Iceberg files on local NVMe storage, dramatically reducing cold-read latency for repeated query patterns.

- Deploy the catalog with high availability. Apart from object storage itself, the catalog is the only stateful component in the decoupled architecture. Deploy it with at least two replicas and configure health checks and automatic failover.

- Use the Iceberg REST Catalog specification. Choosing a catalog that implements the standard REST spec ensures compatibility with all future compute engines, protecting your metadata investment.

- Monitor storage I/O, not just compute. In decoupled architectures, query slowness is often caused by excessive file reads (poor partitioning or missing compaction) rather than compute bottlenecks. Monitor bytes scanned per query as a key performance metric.
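
As one way to act on the last practice, a query log can be screened for scans that touch many tiny files. This sketch assumes a hypothetical log schema (`query_id`, `bytes_scanned`, `files_read`) and an arbitrary 32 MB threshold; actual engines expose these metrics under their own names.

```python
MB = 1024 * 1024


def flag_small_file_scans(query_log, min_avg_file_bytes=32 * MB):
    """Return IDs of queries whose average scanned file is suspiciously small."""
    flagged = []
    for q in query_log:
        if q["files_read"] == 0:
            continue  # nothing was scanned (for example, fully pruned)
        avg_file_bytes = q["bytes_scanned"] / q["files_read"]
        if avg_file_bytes < min_avg_file_bytes:
            flagged.append(q["query_id"])
    return flagged


query_log = [
    {"query_id": "q1", "bytes_scanned": 2_048 * MB, "files_read": 8},      # 256 MB per file
    {"query_id": "q2", "bytes_scanned": 2_048 * MB, "files_read": 4_096},  # 0.5 MB per file
    {"query_id": "q3", "bytes_scanned": 0, "files_read": 0},
]

needs_compaction = flag_small_file_scans(query_log)
print(needs_compaction)
```

Both `q1` and `q2` scan the same number of bytes, but only `q2` is flagged, because it spreads those bytes over thousands of files and pays the per-request overhead each time.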

Summary

Decoupled storage and compute is the architectural principle that makes the data lakehouse possible — and economically superior to traditional coupled architectures at scale. By separating data persistence in cheap, open cloud object storage from data processing in flexible, elastic compute engines, the lakehouse delivers independent scalability, multi-engine interoperability, dramatic cost reduction, and freedom from vendor lock-in.

Apache Iceberg is the metadata layer that makes decoupling safe at scale: its transaction protocol ensures that multiple compute engines can safely read and write the same tables concurrently. Apache Polaris and Project Nessie provide the open catalog layer that coordinates engine access. And Dremio provides the intelligent, high-performance compute layer that can query decoupled lakehouse data with sub-second latency.

For any organization evaluating data platform architecture in 2025 and beyond, decoupled storage and compute is not an optional design choice — it is the baseline assumption of every modern data platform.